Scala, Play 2.0, CoffeeScript, real-time streams, server-sent events, (lots of) HTML5 canvas, local storage, push state, easter egg, ...

If you've worked in IT for long enough, you make sure you get yourself into a position where you no longer have to work on dull projects. It's hard (dull projects are often very lucrative - either for you or your boss) - but once you've ditched the boring projects there's no turning back.

With dull projects out of the picture you get to spend your days tinkering on good projects, or if you're lucky - interesting projects. However. Every once in a long while you have the rare opportunity to be involved in the developer's holy grail: Super-Good Interesting projects. And the recently released Typesafe Console was one of those Super-Good Interesting projects. Here's the story...

The Story

CoffeeScript: right or wrong?

Server-Sent Events and Comet

Canvas vs SVG vs DOM

All things HTML5

Pushin' it live

(friends don't let friends use) Third-party libraries

The Story

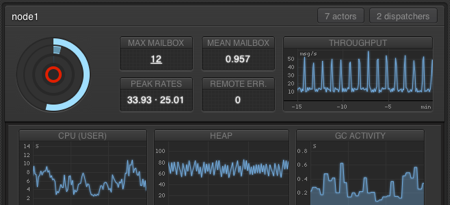

The chaps at Typesafe were looking to create a front end to their impressively powerful Akka actors monitoring system (via their exposed API). The idea was to create a visual tool for learning about Akka, for monitoring systems, and for looking really really cool. The release of the Play 2.0 framework - with all its Scala-ry goodness - was imminent, and thanks to its Iteratee-based IO architecture, it was a perfect tool for the job.

Maxime, Zenexity's Senior Head of Inventing Futuristic Stuff, developed the underlying concepts of the system (which I think I managed to not destroy completely), and Anthony, Zenexity's Chief Officer of Making Stuff Look Funky, took the concepts and ran with them all the way to the gaming grid.

Next, we had to make things actually work. Which meant making the tough decisions: CoffeeScript, or JavaScript?

CoffeeScript: right or wrong?

I chose to go with CoffeeScript for everything. I like CoffeeScript, but I love JavaScript - so I spent a lot of time weighing up the pros and cons.

The Pros: there is built-in support for CoffeeScript in Play 2.0, and writing CoffeeScript is damn fun. The syntax is nice (heck, it had me at "short function syntax with implicit returns"), and there are a bunch of useful destructuring features. It has a super-simple classical inheritance component, and you can write data?.node?.maxThreadCount to soak up nulls - plus a whole bunch more.
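
CoffeeScript's soak operator has a direct counterpart in TypeScript's optional chaining (TypeScript 3.7+), which makes it easy to show what the soak saves you from writing. Here is a minimal sketch, assuming a hypothetical `NodeStats` message shape. Only `node.maxThreadCount` comes from the example above.

```typescript
// Hypothetical shape of a monitoring message; only `node.maxThreadCount`
// comes from the CoffeeScript snippet in the post.
interface NodeStats {
  node?: { maxThreadCount?: number } | null;
}

// Optional chaining: if `data` or `data.node` is null/undefined, the whole
// expression evaluates to undefined instead of throwing a TypeError.
function maxThreadsSoaked(data?: NodeStats | null): number | undefined {
  return data?.node?.maxThreadCount;
}

// The same logic with the null checks written out by hand.
function maxThreadsVerbose(data?: NodeStats | null): number | undefined {
  if (data === null || data === undefined) return undefined;
  const node = data.node;
  if (node === null || node === undefined) return undefined;
  return node.maxThreadCount;
}
```

Across a few dozen graph-update functions, the one-liner adds up to a lot less noise.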

The Cons: it's picky about whitespace without being strict (many a time you need to check the compiled source to see whether you need to add or remove a tab, especially when chaining methods, writing anonymous functions with callbacks, or writing reduce functions). It's a transpiled language, so either you trust that the compiled source is efficient enough for updating a zillion graphs 60 times a second, or you fully understand what it's doing and so don't really need it anyway. And many of its cool features are being rolled into JS.Next (hopefully many of them), so 5 years down the track developers will be depressed when they have to maintain a weird old language - and good luck hunting down the correct version of the compiler (note to self: package the correct version of the compiler in the repo).

Buuut, in the end I gave the thumbs up to CoffeeScript. Did I mention it's fun?

Server-Sent Events and Comet

With the core front-end language decided on, it was time to look at how to talk to the back end. The initial "let's just get something up and running" version used long polling to call the server, which proxied the request through to the Akka service. This was obviously not going to cut it: it's so old school... so it was time to get serious.

Erwan had recently made a cool sample Play app that consumed Twitter's real-time stream, composed it with a comet stream output (using the ><> "poisson" (fish) operator!) and displayed the tweets at a crazy rate - like, 1000 tweets a second for very common search terms.

Sébastien had ported our Play 1.2 app over to Play 2.0, so bridging the Akka API with composable streams via iteratees and enumeratees made perfect sense for the application. It meant we could specify, say, a stream of nodes and compose it with a stream of system information. When the user clicks on a navigation item, we just change streams. If we want to display more panels, we just compose more streams: it can be as modular as we need.
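
Play's iteratees and enumeratees are Scala, so the following is not that API. It is just a hypothetical push-stream sketch in TypeScript of the two moves described above: composing independent streams into one panel feed, and "changing streams" by unsubscribing. `manualSource`, `mapStream` and `mergeStreams` are names invented for the example.

```typescript
// A stream pushes values into a sink and returns an unsubscribe function.
// (Hypothetical mini-library for illustration, not Play's Enumerator API.)
type StreamSink<T> = (value: T) => void;
type PushStream<T> = (sink: StreamSink<T>) => () => void;

// A source we can push into by hand, standing in for a live data feed.
function manualSource<T>(): { stream: PushStream<T>; push: (value: T) => void } {
  const sinks = new Set<StreamSink<T>>();
  return {
    stream: (sink) => {
      sinks.add(sink);
      return () => { sinks.delete(sink); };
    },
    push: (value) => {
      sinks.forEach((sink) => sink(value));
    },
  };
}

// Transform every value of a stream.
function mapStream<A, B>(s: PushStream<A>, f: (a: A) => B): PushStream<B> {
  return (sink) => s((a) => sink(f(a)));
}

// Compose several streams into one; unsubscribing detaches from all of them.
function mergeStreams<T>(...streams: PushStream<T>[]): PushStream<T> {
  return (sink) => {
    const unsubscribers = streams.map((s) => s(sink));
    return () => unsubscribers.forEach((u) => u());
  };
}

// Demo: one panel fed by two independent streams.
const nodeNames = manualSource<string>();
const systemLoad = manualSource<number>();
const overviewPanel = mergeStreams(
  mapStream(nodeNames.stream, (n) => `node:${n}`),
  mapStream(systemLoad.stream, (load) => `load:${load}`),
);
const panelUpdates: string[] = [];
const leavePanel = overviewPanel((msg) => { panelUpdates.push(msg); });
nodeNames.push("akka-1");
systemLoad.push(0.5);
leavePanel(); // e.g. the user clicked another navigation item
nodeNames.push("akka-2"); // no longer delivered to this panel
```

Adding a panel is then just another `mergeStreams` call, which is the kind of modularity the composable-streams approach buys.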

The only potential issue was that when a number of users were all looking at the same thing (such as the default view), separate streams would be generated, which meant separate calls to the Akka API - extremely wasteful. Thankfully Sadek Drobi, co-genius behind the core architecture of Play, came up with a cool solution of "hubbed iteratees", which allowed receivers to be plugged into existing streams. This greatly reduced the number of API calls needed and had the added bonus of giving us synchronized views when looking at the same streams (minus network latency).
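
The hubbed iteratees themselves are Scala and live in Play. Purely to illustrate the idea in a few lines, here is a TypeScript sketch; the `Hub` class and its method names are hypothetical, not Play's API. The upstream is opened once, when the first viewer arrives. Every value is broadcast to all current viewers, and the upstream is closed when the last one leaves.

```typescript
type HubListener<T> = (value: T) => void;
// Opens the upstream feed (e.g. polling the monitoring API) and returns a
// function that closes it.
type Upstream<T> = (emit: (value: T) => void) => () => void;

class Hub<T> {
  private listeners = new Set<HubListener<T>>();
  private stopUpstream: (() => void) | null = null;
  private readonly upstream: Upstream<T>;

  constructor(upstream: Upstream<T>) {
    this.upstream = upstream;
  }

  subscribe(listener: HubListener<T>): () => void {
    this.listeners.add(listener);
    // Only the first subscriber opens the (expensive) upstream connection.
    if (this.stopUpstream === null) {
      this.stopUpstream = this.upstream((value) => this.broadcast(value));
    }
    return () => {
      this.listeners.delete(listener);
      // Close the upstream once the last viewer has gone.
      if (this.listeners.size === 0 && this.stopUpstream !== null) {
        this.stopUpstream();
        this.stopUpstream = null;
      }
    };
  }

  private broadcast(value: T): void {
    // Every subscriber receives the same value, so views stay in sync.
    for (const listener of Array.from(this.listeners)) listener(value);
  }
}

// Demo: a fake upstream that counts how often it is opened.
let upstreamOpens = 0;
const emitters: Array<(n: number) => void> = [];
const threadCountHub = new Hub<number>((emit) => {
  upstreamOpens += 1;
  emitters.push(emit);
  return () => { emitters.splice(0); };
});
const viewA: number[] = [];
const viewB: number[] = [];
const leaveA = threadCountHub.subscribe((n) => { viewA.push(n); });
const leaveB = threadCountHub.subscribe((n) => { viewB.push(n); });
emitters.forEach((emit) => emit(42)); // one upstream value, delivered to both views
leaveA();
leaveB(); // last viewer gone: upstream closed
```

Two viewers trigger a single upstream open, which is where the savings in API calls come from.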

The comet streaming was working like a charm - but we were a bit uneasy about basing everything on comet. It's really a hack, and so it was time to convert things over to the non-hack equivalent: Server-Sent Events. That's really just paving the comet cowpath: we added 5 lines of code and an additional conditional check in the JavaScript, et voilà: Server-Sent Events with comet fallback.
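
The post doesn't reproduce those 5 lines, so the following only illustrates why the switch is small, using hypothetical function names. The same stream of messages gets a different framing on the wire, and the client picks a transport with a single feature check. The SSE framing follows the EventSource wire format (one `data:` line per payload line, terminated by a blank line). The comet callback name `parent.cometMessage` is invented for the example.

```typescript
// Server-Sent Events framing: prefix every payload line with "data: " and end
// the event with a blank line. Assumes payloads use "\n" line breaks.
function toServerSentEvent(payload: string): string {
  return payload.split("\n").map((line) => `data: ${line}\n`).join("") + "\n";
}

// Comet ("forever iframe") framing: each message is a <script> chunk calling
// a callback in the parent page. `parent.cometMessage` is a hypothetical name.
function toCometChunk(payload: string): string {
  // JSON-encode the payload and escape "<" so the data can never close the tag.
  const js = JSON.stringify(payload).replace(/</g, "\\u003c");
  return `<script type="text/javascript">parent.cometMessage(${js});</script>`;
}

// Client side: the one extra conditional that chooses a transport.
function pickTransport(globalScope: { EventSource?: unknown }): "sse" | "comet" {
  return typeof globalScope.EventSource === "function" ? "sse" : "comet";
}
```

In the browser you would call `pickTransport(window)`. If it returns "sse", open an `EventSource`; otherwise, load the comet iframe.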

Canvas vs SVG vs DOM

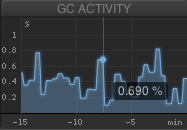

From the outset we wanted some wacky graphs (like the node and the dispatcher visualisations) so you could quickly grok how your system was doing. Using a pre-existing library wasn't really an option - especially when we weren't certain about how we wanted to render: canvas, SVG, or plain-ol' DOM elements with CSS transitions.

All were trialled (the SVG version used Raphaël, naturally). Canvas came out the winner thanks to its flexibility to paint whatever we wanted easily (over the course of the project the graphs changed entirely, many times!), and its performance in modern browsers was acceptable.

Aside from some mouseover information and a couple of clickable areas, there is not a lot of user interaction with the graph elements - if more had been required, then SVG (or DOM) probably would have won out.

Once we'd figured out and mocked up a bunch of graphs, @greweb carved everything up into a flexible, reusable charting component library, using multiple canvas layers per graph and a cool constraints system for putting everything together. All the graphs were then assembled out of these components.
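
Multiple canvas layers per graph usually means stacking several `<canvas>` elements. That way the slow-changing parts (axes, labels) aren't repainted along with the live data. The component library itself isn't shown in the post, so this is a hypothetical sketch of just the layering idea. The `Layer` and `LayeredChart` names are ours, and `draw()` stands in for painting on a layer's own 2D context.

```typescript
// Stand-in for one stacked <canvas> element; draw() would paint on its 2D context.
interface Layer {
  name: string;
  draw(): void;
}

class LayeredChart {
  private readonly layers: Layer[];
  private readonly dirty = new Set<string>();

  constructor(layers: Layer[]) {
    this.layers = layers;
    // Every layer needs painting once at start-up.
    for (const layer of layers) this.dirty.add(layer.name);
  }

  // Mark a layer as needing a repaint (e.g. the data layer on each update).
  invalidate(name: string): void {
    this.dirty.add(name);
  }

  // Repaint dirty layers only, bottom to top; clean layers keep their pixels.
  render(): void {
    for (const layer of this.layers) {
      if (this.dirty.has(layer.name)) layer.draw();
    }
    this.dirty.clear();
  }
}

// Demo: static axes plus a live data layer.
const drawCounts = { axes: 0, data: 0 };
const dispatcherChart = new LayeredChart([
  { name: "axes", draw: () => { drawCounts.axes += 1; } },
  { name: "data", draw: () => { drawCounts.data += 1; } },
]);
dispatcherChart.render();           // first frame: both layers painted
dispatcherChart.invalidate("data");
dispatcherChart.render();           // new data arrived: only the data layer repainted
dispatcherChart.render();           // nothing changed: nothing repainted
```

The payoff grows with the cost of the static layers. Elaborate backgrounds are painted once instead of 60 times a second.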

All things HTML5

Along the way a whole host of incidental "HTML5 et al." technologies got dragged into the mix: requestAnimationFrame for animating (note: in the prefixed WebKit implementation, at least, don't ignore the second parameter to the call - it's the element that you want to animate, and specifying it speeds things up a lot), push state for managing history, local storage for temporary caching of graph data (so things reload quickly if you're navigating around), the EventSource object, map/reduce and other goodies from JavaScript 1.8... I tell you, these are good times to be a developer.
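
Using local storage as a short-lived cache mostly comes down to storing a timestamp alongside the data. Here is a minimal sketch, assuming a hypothetical `console:` key prefix and JSON-serialisable graph data. The `KeyValueStorage` interface is a subset of the standard Web Storage methods, so `window.localStorage` fits it in the browser and an in-memory stub fits it elsewhere.

```typescript
// Subset of the Web Storage API (getItem/setItem/removeItem) used here.
interface KeyValueStorage {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
  removeItem(key: string): void;
}

interface CacheEntry<T> {
  savedAt: number; // milliseconds since the epoch
  value: T;
}

const KEY_PREFIX = "console:"; // hypothetical namespace for cached graph data

function cacheGraphData<T>(storage: KeyValueStorage, key: string, value: T, now: number = Date.now()): void {
  const entry: CacheEntry<T> = { savedAt: now, value };
  try {
    storage.setItem(KEY_PREFIX + key, JSON.stringify(entry));
  } catch {
    // setItem throws when the storage quota is exceeded; the cache is only an
    // optimisation, so we simply skip caching in that case.
  }
}

// Returns cached data younger than maxAgeMs; stale entries are removed.
function readGraphData<T>(storage: KeyValueStorage, key: string, maxAgeMs: number, now: number = Date.now()): T | null {
  const raw = storage.getItem(KEY_PREFIX + key);
  if (raw === null) return null;
  const entry = JSON.parse(raw) as CacheEntry<T>;
  if (now - entry.savedAt > maxAgeMs) {
    storage.removeItem(KEY_PREFIX + key);
    return null;
  }
  return entry.value;
}

// Usage with an in-memory stub (in the browser, pass window.localStorage).
const memoryStore = new Map<string, string>();
const stubStorage: KeyValueStorage = {
  getItem: (k) => memoryStore.get(k) ?? null,
  setItem: (k, v) => { memoryStore.set(k, v); },
  removeItem: (k) => { memoryStore.delete(k); },
};
cacheGraphData(stubStorage, "node-1/threads", [3, 5, 8], 1000);
const fresh = readGraphData<number[]>(stubStorage, "node-1/threads", 60000, 2000);
const stale = readGraphData<number[]>(stubStorage, "node-1/threads", 60000, 100000);
```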

Pushin' it live

Although everything was ticking along nicely on our local versions, we also needed to provide a demo app that would be publicly accessible. By the public. All of them. Which is a very different use case from the console's primary purpose: monitoring a system with, maybe, a handful of sys-admins/developers watching at a time.

Sadek's hubbed iteratee solution scaled beautifully (*cough* in theory, and simulated load tests *cough*) - but there was one potential bottleneck (that kept me awake at night for a few days before the release)... bandwidth. The console is all about pushing tonnes of real-time data into your browser. And tonnes of data, multiplied by every second, multiplied by thousands of simultaneous users, equals a. lot. of. bandwidth.
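
A back-of-envelope calculation shows why bandwidth was the worry. The numbers here are purely hypothetical placeholders, since the post doesn't give payload sizes or user counts. The shape of the formula is the point: egress grows linearly with payload size, update rate and concurrent users. Sharing the upstream through a hub cuts Akka API calls, but it doesn't reduce the bytes each browser has to receive.

```typescript
// Outbound bytes per second: every connected browser receives its own copy
// of every update, so the three factors simply multiply.
function egressBytesPerSecond(bytesPerUpdate: number, updatesPerSecond: number, users: number): number {
  return bytesPerUpdate * updatesPerSecond * users;
}

// Hypothetical inputs: 2,000 bytes per update, one update per second, 2,000 users.
const bytesPerSecond = egressBytesPerSecond(2000, 1, 2000); // 4,000,000 B/s
const megabitsPerSecond = (bytesPerSecond * 8) / 1e6;      // 32 Mbit/s
const gigabytesPerDay = (bytesPerSecond * 86400) / 1e9;    // 345.6 GB/day
```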

Sadek's hubbed iteratee solution scaled beautifully (*cough* in theory, and simulated load tests *cough*) - but there was one potential bottleneck (that kept me awake at night for a few days before the release)... bandwidth. The console is all about pushing tonnes of real-time data into your browser. And tonnes of data, multiplied by every second, multiplied by thousands of simultaneous users, equals a. lot. of. bandwidth.

Thankfully, I was not in charge of making sure things worked in the real world. Our resident Head of Making Things Stay Online - Jean-François - was. He set up a small cluster of cloudy machines, with the ability to bring new machines online in 5 minutes if necessary.

When things went live, I sat there cautiously checking if things were still running. Jean-François didn't bat an eyelid. I was nervous that the untested-under-heavy-load-in-the-field streams would fall over as the hundreds of connections became thousands - and we'd need to bring a whole bunch of (expensive) machines up. After a few hours the bandwidth really started exploding and each reload of the page would give me mild heart attacks... I asked Jean-François if he thought we'd be okay. He calmed me down by replying:

It looks like we deployed a network of military satellites to make a sub-orbital nuke against a pigeon.

He's a professional.

(friends don't let friends use) Third-party libraries

After things settled down, we could step back and do a bit of a post-mortem. Looking at the package we realised that we were including just two third-party libraries: jQuery for DOM manipulation (yeah, it's becoming less essential over time, but it's still so convenient), and Spin.js to add a lil' spinner when things are loading... and that's all. We didn't need an MVC-esque library (streams are dispatched via a simple message dispatcher - anything more would have been overkill), and CoffeeScript gave us a basic class implementation, which was all we needed.
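
A "simple message dispatcher" can be very small indeed. The post doesn't show its code, so this is a hypothetical sketch that assumes each incoming message is JSON tagged with a `stream` name. Graphs register handlers per stream, and messages for streams no one is displaying are dropped.

```typescript
interface StreamMessage {
  stream: string; // hypothetical routing field
  data: unknown;
}

type StreamHandler = (data: unknown) => void;

class Dispatcher {
  private handlers = new Map<string, StreamHandler[]>();

  // Register a handler for one named stream (e.g. from a graph component).
  on(stream: string, handler: StreamHandler): void {
    const list = this.handlers.get(stream) ?? [];
    list.push(handler);
    this.handlers.set(stream, list);
  }

  // Route a message to every handler registered for its stream.
  dispatch(message: StreamMessage): void {
    const list = this.handlers.get(message.stream) ?? [];
    for (const handler of list) handler(message.data);
  }
}

// Demo
const consoleDispatcher = new Dispatcher();
const received: string[] = [];
consoleDispatcher.on("nodes", (data) => { received.push(`nodes:${JSON.stringify(data)}`); });
consoleDispatcher.on("dispatchers", (data) => { received.push(`dispatchers:${JSON.stringify(data)}`); });
// In the browser this would be called from the EventSource/comet message callback.
consoleDispatcher.dispatch(JSON.parse('{"stream":"nodes","data":{"count":3}}') as StreamMessage);
consoleDispatcher.dispatch({ stream: "actors", data: [] }); // no handler: dropped
```

Anything beyond a lookup table from stream name to handlers is hard to justify when the server already does the composing.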

We had the rare benefit of only having to target modern browsers - and when this is the case then piling on libraries isn't necessary: the core (CSS/JS), HTML5, and related technologies underneath them are already awesome, easy to use, and really, really, really fun to develop with. With the emergence of highly scalable, reactive server-side technologies like Akka and Play 2.0 - combined with the cool features that are filtering into browsers all over the globe - I personally am pretty excited to see what revolutionary stuff people come up with in the next few years.

And I'm going to do my best to make sure I'm working on them, too.

5 Comments

I like how your article shows the close development between Play2 and Akka Console.

Akka Console helped Play2 development.

Play2 core developers were able to benchmark and improve the framework by testing how it runs with Akka Console and other example apps.

Play2 also helped Akka Console development.

We had our real-world application needs. Sadek and Guillaume found solutions and generalized them in Play2, like this Hub concept.

Thanks for writing this up. What’s that akka actor monitoring API you are talking about?

Hello

Thanks for the great write-up. Any chance the “hubbed iteratees” is open source?

Thanks

-Rao Venu

Hey Rao, they kind of secretly snuck into Play at the last moment - the documentation is coming, but check out the comments at the bottom of this post for more details for now!

http://blog.greweb.fr/2012/03/play-painter-how-ive-improved-the-30-minutes-prototyped-version/

Hi,

I think the typesafe console looks amazing. Are there any other resources detailing the making of? I’m very interested.

Regards,